Code Contents

The public repository at github.com/ProSDD/codes contains everything needed to reproduce our results and run ProSDD on new data.

ProSDD Checkpoints

Pretrained ProSDD checkpoints under both training settings: ASVspoof 2019 LA and ASVspoof 2024.

Baseline Checkpoints

We also release pretrained checkpoints for RawNet2, AASIST, and XLSR-SLS trained on ASVspoof 2024, to help the community make better use of 2024-trained models.

Training & Inference Scripts

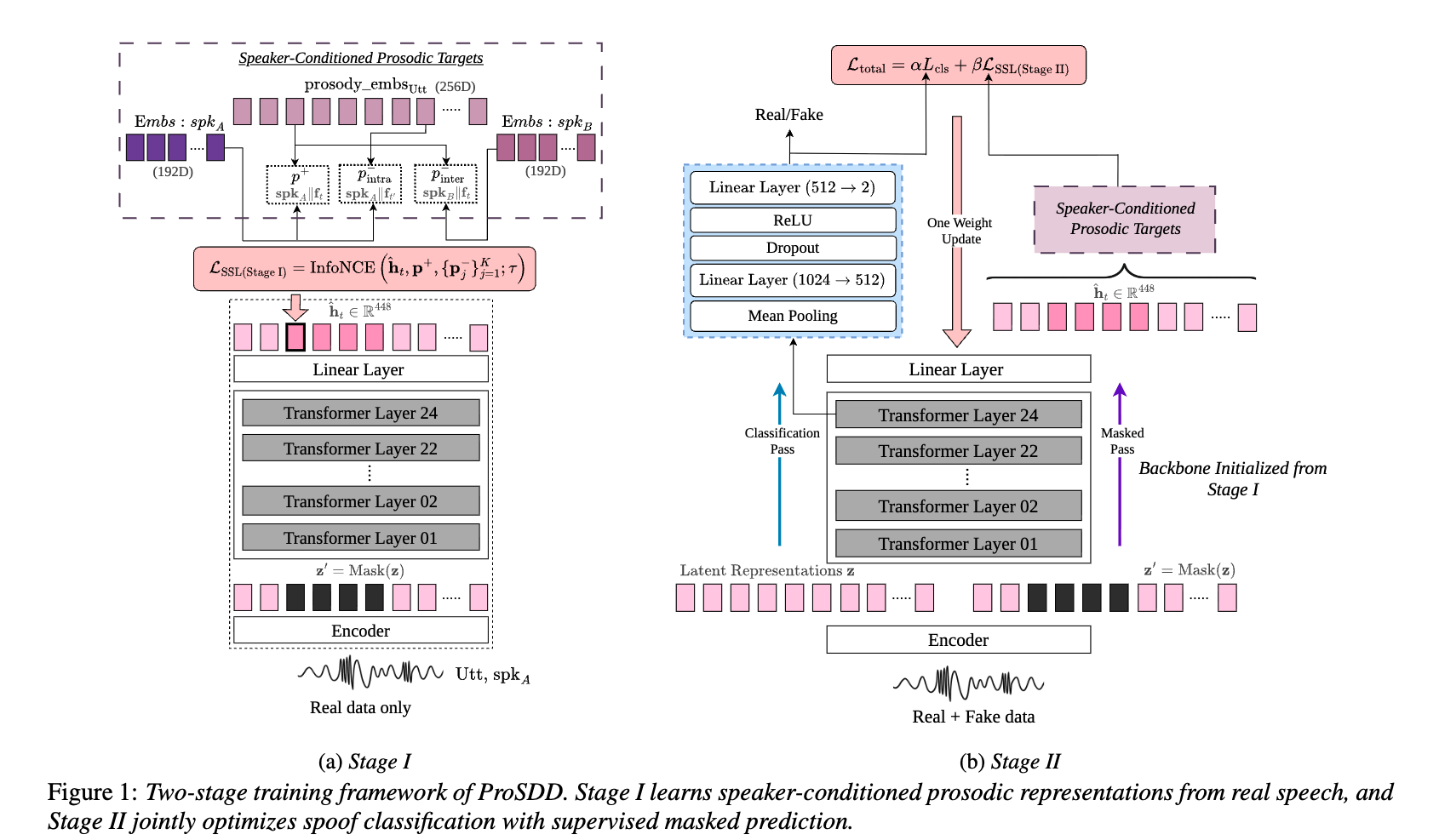

Full two-stage ProSDD training pipeline and inference scripts.

Supervised Targets

We provide the extracted speaker embeddings (ECAPA-TDNN) used as supervised targets in Stage I and Stage II for the LibriSpeech, ASVspoof 2019, and ASVspoof 2024 train and dev sets. Because the prosody files are large, we release the code used to extract the frame-level prosody embeddings rather than the embeddings themselves.

Reproducibility Notes

Hyperparameter configs and training details are provided in the code to help reproduce all results reported in the paper.

Score Files

All score files needed to compute EER for ProSDD under both training settings are available, along with score files for the baselines trained on ASVspoof 2024. We release the 2024-trained baseline scores to facilitate use of this recent dataset by the community.
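For readers who want to recompute EER from a score file, the following is a minimal sketch of a threshold-sweep EER estimate. It makes assumptions not stated on this page: that scores have already been split into bona fide and spoof arrays, and that a higher score means "more likely bona fide". The function name `compute_eer` is hypothetical, and this is not necessarily the scoring tool used to produce the reported numbers.

```python
import numpy as np


def compute_eer(bonafide_scores, spoof_scores):
    """Estimate the Equal Error Rate by sweeping every observed score as a threshold.

    Assumption: higher score = more likely bona fide.
    Returns (eer, threshold), with eer as a fraction in [0, 1].
    """
    bonafide = np.sort(np.asarray(bonafide_scores, dtype=float))
    spoof = np.sort(np.asarray(spoof_scores, dtype=float))
    thresholds = np.unique(np.concatenate([bonafide, spoof]))

    # False rejection rate: fraction of bona fide trials scored below the threshold.
    frr = np.searchsorted(bonafide, thresholds, side="left") / bonafide.size
    # False acceptance rate: fraction of spoof trials scored at or above the threshold.
    far = 1.0 - np.searchsorted(spoof, thresholds, side="left") / spoof.size

    # The EER is taken where the two error rates are closest to each other.
    idx = int(np.argmin(np.abs(frr - far)))
    eer = (frr[idx] + far[idx]) / 2.0
    return float(eer), float(thresholds[idx])


# Toy example with made-up scores (not taken from the released score files).
toy_eer, toy_threshold = compute_eer([0.9, 0.8, 0.7, 0.4], [0.1, 0.2, 0.3, 0.6])
print(f"EER = {100 * toy_eer:.2f}% at threshold {toy_threshold}")
```

Multiply the returned EER by 100 to compare against the percentages in the tables. With finitely many trials the two error curves may not cross exactly, so this sketch averages them at the closest point; other tools may interpolate differently and give slightly different values.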

Results & Analysis

We report Equal Error Rate (EER ↓, in %) across all benchmarks. ProSDD achieves the lowest EER on both standard and emotional/expressive datasets under both training settings, with one exception: under ASVspoof 2019 LA training, XLSR-SLS is better on ASVspoof 2021 (3.04 vs. 3.87).

Dataset names in the column headers are linked to their respective download pages.

(a) Trained on ASVspoof 2019 LA

| Model | ASV 2019 | ASV 2021 | ASV 2024 | EmoFake | EmoSpoof |
|---|---|---|---|---|---|
| RawNet2 | 4.60 | 8.08 | 40.67 | 21.71 | 43.04 |
| AASIST | 0.83 | 8.15 | 35.53 | 13.64 | 31.06 |
| XLSR-SLS | 0.56 | 3.04 | 25.43 | 8.84 | 18.92 |
| ProSDD | 0.42 | 3.87 | 16.14 | 3.70 | 9.54 |

(b) Trained on ASVspoof 2024

| Model | ASV 2019 | ASV 2021 | ASV 2024 | EmoFake | EmoSpoof |
|---|---|---|---|---|---|
| RawNet2 | 24.75 | 25.59 | 43.61 | 49.49 | 27.13 |
| AASIST | 23.16 | 22.74 | 25.77 | 62.71 | 15.19 |
| XLSR-SLS | 27.00 | 26.54 | 39.62 | 58.57 | 25.92 |
| ProSDD | 19.04 | 18.08 | 7.38 | 25.06 | 11.96 |
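As a quick cross-check of tables (a) and (b), the sketch below picks the lowest-EER model for each benchmark under each training setting. The numbers are copied from the tables; the names `RESULTS`, `BENCHMARKS`, and `best_per_benchmark` are illustrative only and do not come from the repository.

```python
# EER (%) values copied from tables (a) and (b); lower is better.
BENCHMARKS = ["ASV 2019", "ASV 2021", "ASV 2024", "EmoFake", "EmoSpoof"]

RESULTS = {
    "ASVspoof 2019 LA training": {
        "RawNet2": [4.60, 8.08, 40.67, 21.71, 43.04],
        "AASIST": [0.83, 8.15, 35.53, 13.64, 31.06],
        "XLSR-SLS": [0.56, 3.04, 25.43, 8.84, 18.92],
        "ProSDD": [0.42, 3.87, 16.14, 3.70, 9.54],
    },
    "ASVspoof 2024 training": {
        "RawNet2": [24.75, 25.59, 43.61, 49.49, 27.13],
        "AASIST": [23.16, 22.74, 25.77, 62.71, 15.19],
        "XLSR-SLS": [27.00, 26.54, 39.62, 58.57, 25.92],
        "ProSDD": [19.04, 18.08, 7.38, 25.06, 11.96],
    },
}


def best_per_benchmark(table):
    """Map each benchmark name to (model with the lowest EER, that EER)."""
    best = {}
    for i, benchmark in enumerate(BENCHMARKS):
        model = min(table, key=lambda m: table[m][i])
        best[benchmark] = (model, table[model][i])
    return best


for setting, table in RESULTS.items():
    print(setting)
    for benchmark, (model, eer) in best_per_benchmark(table).items():
        print(f"  {benchmark}: {model} ({eer:.2f})")
```

Run as written, this lists ProSDD as the best model everywhere except ASV 2021 under ASVspoof 2019 LA training, where XLSR-SLS has the lower EER.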

(c) Ablation Study — Trained on ASVspoof 2019 LA

MP = supervised masked prediction objective. "w/o MP" removes masked prediction in both stages. "w/o Stage I" removes real-only prosodic pretraining while retaining MP in Stage II.

| Model | ASV 2019 | ASV 2021 | ASV 2024 | EmoFake | EmoSpoof |
|---|---|---|---|---|---|
| w/o MP | 6.78 | 25.18 | 28.12 | 14.02 | 10.02 |
| w/o Stage I | 5.14 | 7.83 | 15.55 | 6.37 | 15.02 |
| ProSDD | 0.42 | 3.87 | 16.14 | 3.70 | 9.54 |

Key Findings

- Expressive robustness:

  - 2019 training: ProSDD achieves ~58% relative EER reduction on EmoFake and ~50% on EmoSpoof vs. the strongest baseline (XLSR-SLS).

  - 2024 training: ProSDD again outperforms all baselines on both emotional benchmarks, with ~49% relative EER reduction on EmoFake (vs. RawNet2, the strongest baseline there) and ~21% on EmoSpoof (vs. AASIST). The EmoFake setting is particularly challenging, as it contains only voice conversion samples while training uses TTS-only data, yet ProSDD remains the most robust model under this cross-attack mismatch.

  Under both training settings, ProSDD shows strong improvements over baselines, especially on ASVspoof 2024, whose expressive synthesis data aligns well with ProSDD's prosody-driven strategy.

- Standard benchmarks: Performance gains on emotional datasets do not compromise standard benchmark accuracy. Under 2019 training, ProSDD remains competitive on ASVspoof 2019 and 2021; under 2024 training, it surpasses all baselines on both benchmarks.

- Ablation: Removing supervised masked prediction degrades performance on every benchmark, most severely on ASVspoof 2019 (0.42 to 6.78), ASVspoof 2021 (3.87 to 25.18), and EmoFake (3.70 to 14.02). Retaining masked prediction only in Stage II (without real-only pretraining) improves stability but fails to ensure consistent cross-dataset generalization: it is marginally ahead of the full model on ASVspoof 2024 (15.55 vs. 16.14) but worse on the other four benchmarks. The full two-stage framework gives the lowest EER on four of the five benchmarks, highlighting the importance of real-speech prosodic pretraining and joint supervision for improved generalization.
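The relative EER reductions quoted in the findings above can be recomputed directly from tables (a) and (b). The sketch below assumes the usual definition, (baseline − model) / baseline; the helper name `relative_reduction` is illustrative only.

```python
def relative_reduction(baseline_eer, model_eer):
    """Relative EER reduction in percent: 100 * (baseline - model) / baseline."""
    return 100.0 * (baseline_eer - model_eer) / baseline_eer


# (label, strongest-baseline EER, ProSDD EER), all copied from tables (a) and (b).
comparisons = [
    ("2019 training, EmoFake vs. XLSR-SLS", 8.84, 3.70),
    ("2019 training, EmoSpoof vs. XLSR-SLS", 18.92, 9.54),
    ("2024 training, EmoFake vs. RawNet2", 49.49, 25.06),
    ("2024 training, EmoSpoof vs. AASIST", 15.19, 11.96),
]

for label, baseline, prosdd in comparisons:
    print(f"{label}: {relative_reduction(baseline, prosdd):.1f}%")
```

The same helper applies to any other pair of cells, for example ASVspoof 2024 under either training setting.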